Anthropomorphization in HRI Design

Anthropomorphization is the attribution of human traits, emotions, or intentions to non-human entities. The term derives from the Greek words anthropos (meaning “human”) and morphe (meaning “form”) and refers to the perception of human-like qualities in non-human objects. Anthropomorphization is common in everyday life. People talk to virtual assistants such as Siri or Alexa as if they were people, thanking or apologizing to them. They refer to a car as if it were a person, saying things like “She’s been good to me” or “He’s getting old”. They remark that “That grater looks like it has eyes”. This last example is pareidolia, a specific type of anthropomorphization in which human-like features are perceived in random patterns or ordinary objects.

Anthropomorphic design is a technique that takes advantage of people’s tendency to see human-like characteristics in non-human objects. It is commonly used in human-robot interaction (HRI) design, where robots are given a human-like appearance, behaviour, and social cues. For example, humanoid robots such as ASIMO and Nao have human body shapes, proportions, and expressions. Non-humanoid robots such as Keepon and Google’s autonomous car prototype have anthropomorphic features such as eyes and symmetrical bodies. Animal-like appearance and behaviour, as seen in Pleo or in a Roomba fitted with a tail, are also considered a form of anthropomorphic design. Anthropomorphic design is not limited to appearance and form. It also covers the robot’s behaviour in relation to its environment and to other actors. The technique encourages people to see robots as social agents and to ascribe human-like traits and abilities to them.

A video depicting ASIMO’s capabilities:

A video showcasing Nao’s capabilities:

A video of Keepon doing the “Harlem Shake”:

A video explaining Pleo’s basic features:

The traditional engineering literature on anthropomorphism has mostly focused on how robots are perceived in terms of their appearance. More recent psychological theories have broadened the concept beyond appearance. The framework proposed by Epley and colleagues, in particular, identifies three core determinants: effectance motivation, sociality motivation, and elicited agent knowledge. Effectance motivation is the desire to understand and predict the behaviour of other agents. It can be activated when people are uncertain about how to interact with a robot. Sociality motivation is the desire for social connection. It can lead people to anthropomorphize robots as a way of coping with loneliness or a lack of social contact. Elicited agent knowledge refers to people’s use of what they know about humans and social interaction to make sense of robots. Together, these three determinants help explain why humans attribute human-like traits and emotions to nonhuman entities, including robots. This attribution can in turn shape both how people perceive robots and how they behave towards them. Anthropomorphization of computers and media is well documented, but the extent to which the same findings hold for robots is still debated. Nonetheless, empirical studies with social robots have provided support for the three-factor model.

Read more about Epley et al.’s theoretical framework of anthropomorphism:

https://psycnet.apa.org/doiLanding?doi=10.1037/0033-295X.114.4.864

The Uncanny Valley

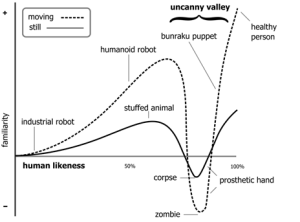

Mori (1970) proposed that the more closely robots resemble humans, the more likable people find them, but only up to a point. At a threshold of near-human realism, people begin to feel uncomfortable and likability drops sharply. This dip is the uncanny valley. Likability recovers only once the robot becomes practically indistinguishable from a human. According to Mori, movement amplifies the effect, deepening the valley for robots that move. The relationship between the degree of anthropomorphism and likability is shown in the figure above.

The uncanny valley theory by Mori (adapted from the book Human-Robot Interaction: An Introduction)

Read more about Mori’s Uncanny Valley theory:

https://ieeexplore.ieee.org/document/6213238

Designing anthropomorphism

Robot appearance

Robot designers and social scientists view anthropomorphism differently. Robot designers see it as a characteristic of the robot itself, while social scientists view it as something that humans attribute to the robot. Either way, anthropomorphism concerns the relationship between robot design and people’s perceptions of robots. Designers can trigger anthropomorphic inferences through a robot’s appearance and behaviour. The appearance need not be elaborate: a few lines on a sheet of paper can be enough to suggest a human form. Anthropomorphization can be achieved with only a minimal set of humanlike features, and simple robots such as Keepon and R2-D2 are effective in triggering it.

Robot behaviour

Robot behaviour can also be designed to increase anthropomorphization. In a classic study, simple geometric shapes moving against a white background led people to describe what they saw in terms of social relationships and humanlike feelings and motivations. Movement alone can communicate a surprisingly wide range of humanlike expressive behaviour. Reactive behaviour, in which the robot responds quickly to external events, is one of the easiest ways for robot builders to encourage anthropomorphization.

Impact of Context

People’s perceptions of anthropomorphic robot design are often affected by factors such as age, cultural background, and personality. The context of use also matters. Simply placing a robot in a social situation with humans seems to increase the likelihood that people will anthropomorphize it. The more humanlike the robot, the more people will expect of it in terms of humanlike contingency, dialogue, and other capabilities. People who work alongside robots prefer more anthropomorphic designs. This is shown by their preference for a Roomba that displays emotions and intentions through a dog-like tail. In conclusion, anthropomorphism is about the relationship between robot design and people’s perceptions of robots. Robot designers can exploit appearance and behaviour to achieve a more humanlike perception of the robot. People’s expectations and contextual factors affect how likely they are to anthropomorphize it.

Measuring anthropomorphization

HRI researchers need to recognize how prevalent the anthropomorphization of robots is. They also need to determine how to measure its presence in interactions. The three-factor model of anthropomorphism suggests that humans attribute typically human mental and emotional states to nonhuman entities. To measure how humanlike a robot is perceived to be in form or behaviour, HRI researchers draw on existing literature on measuring the attribution of humanity among humans. Measures of anthropomorphism include asking participants whether they attribute a mind or human traits to robots. They also include assessing whether people perceive robots as capable of experiencing human emotions, intentions, or free will. Standardized instruments are also used, such as the Godspeed questionnaire, the ROSAS scale, and the revised Godspeed questionnaire. These measures may rely on self-reports and questionnaires or on subtle behavioural indicators, such as language use and adherence to social norms. Broadening the range of measurements from direct to indirect approaches can improve research in social robotics and help validate psychological theories.
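To make the questionnaire approach more concrete, here is a minimal sketch of how responses to a Godspeed-style anthropomorphism index might be scored. It rests on several assumptions. The five anchor pairs are assumed to match the Godspeed anthropomorphism items and should be checked against the published questionnaire. The function names (`anthropomorphism_score`, `cronbach_alpha`) are hypothetical. The ratings are invented. Averaging a participant's item ratings and reporting Cronbach's alpha for internal consistency is a common convention, not a procedure prescribed in this section.

```python
"""Hypothetical scoring sketch for a Godspeed-style anthropomorphism index.

ASSUMPTIONS: the five anchor pairs below are assumed to match the Godspeed
anthropomorphism items (verify against the published questionnaire), and
all ratings in the demo are invented for illustration.
"""
from statistics import variance

# Assumed anchor pairs for 5-point semantic differential items (1 = left anchor, 5 = right anchor).
ANTHROPOMORPHISM_ITEMS = [
    ("Fake", "Natural"),
    ("Machinelike", "Humanlike"),
    ("Unconscious", "Conscious"),
    ("Artificial", "Lifelike"),
    ("Moving rigidly", "Moving elegantly"),
]


def anthropomorphism_score(ratings: list[int]) -> float:
    """Return one participant's index score as the mean of their item ratings."""
    if len(ratings) != len(ANTHROPOMORPHISM_ITEMS):
        raise ValueError(f"expected {len(ANTHROPOMORPHISM_ITEMS)} ratings, got {len(ratings)}")
    if any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("ratings must lie on the 1-5 scale")
    return sum(ratings) / len(ratings)


def cronbach_alpha(responses: list[list[int]]) -> float:
    """Internal consistency of the items across participants (one row per participant)."""
    if len(responses) < 2:
        raise ValueError("need at least two participants")
    k = len(responses[0])
    if k < 2 or any(len(row) != k for row in responses):
        raise ValueError("every participant must rate the same k >= 2 items")
    # Sample variance of each item across participants.
    item_variances = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of each participant's summed score.
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


if __name__ == "__main__":
    # Invented ratings from four hypothetical participants, one row each.
    responses = [
        [2, 1, 2, 2, 3],
        [4, 4, 3, 4, 4],
        [3, 2, 3, 3, 3],
        [5, 4, 4, 5, 4],
    ]
    for pid, row in enumerate(responses, start=1):
        print(f"participant {pid}: {anthropomorphism_score(row):.1f}")
    print(f"Cronbach's alpha: {cronbach_alpha(responses):.2f}")
```

For this invented data, the script prints scores of 2.0, 3.8, 2.8, and 4.4 and an alpha of about 0.96. Averaging rather than summing keeps each score on the original 1 to 5 scale. A high alpha only shows that the items vary together. It does not show that they actually capture anthropomorphism.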

Read more about the Godspeed questionnaire:

https://link.springer.com/article/10.1007/s12369-008-0001-3

Read more about the ROSAS scale:

https://dl.acm.org/doi/10.1145/2909824.3020208

Read more about the revised Godspeed questionnaire:

https://www.sciencedirect.com/science/article/pii/S0747563210001536?via%3Dihub

References

Bartneck, C. et al. (2020) Human-Robot Interaction: An Introduction. Cambridge: Cambridge University Press. Available at: https://doi.org/10.1017/9781108676649.